Add this script to your PowerShell profile. If you don’t know where your PowerShell profile is, open a PowerShell session, type $profile, and press <Enter>. In Windows 7, you can start PowerShell in the current folder by typing powershell in the address bar of Windows Explorer.

    # Set environment variables for the Visual Studio Command Prompt
    $vspath = (Get-ChildItem env:VS100COMNTOOLS).Value
    $vsbatchfile = "vsvars32.bat"

    pushd $vspath

    # Run the batch file, then dump the resulting environment with "set".
    # We are already in $vspath, so the batch file can be invoked by name.
    cmd /c "$vsbatchfile&set" |
    foreach {
        # $_ is the current line of output, in NAME=value form
        if ($_ -match "=") {
            # Split on the first "=" only, so values that contain "=" stay intact
            $v = $_ -split "=", 2
            Set-Item -Force -Path "ENV:\$($v[0])" -Value "$($v[1])"
        }
    }

    popd

    Write-Host "Visual Studio 2010 Command Prompt variables set." -ForegroundColor Red

I’m trying to catalog View-ViewModel Binding Patterns so that I can compare their strengths and weaknesses. So far I can think of 2 basic ones. The names I gave them are made up. What are the others?

Name: View-Instantiation.

Description: The View directly instantiates the ViewModel and assigns it to the data context.

Sample:

<Window.DataContext>

<ViewModels:MyViewModel/>

</Window.DataContext>

Pros: Easy to set up and get going. Easy to add design-time data.

Cons: Requires default constructor on ViewModel. This makes Dependency Injection scenarios difficult.

Name: View Templates

Description: The View is chosen by a data template associated with the ViewModel type.

Pros: ViewModels fit easily into Dependency Injection scenarios.

Cons: Design-time data is more difficult to accommodate. Tooling cannot tell the type of the ViewModel associated to the View during View development.

The benefits of using NuGet to manage your project dependencies should be well-known by now.

If not, read this.

There’s one area where NuGet is still somewhat deficient: Team Workflow.

Ideally, when you begin work on a new solution, you should follow these steps:

1) Get the latest version of the source code.

2) Run a command to install any dependent packages.

3) Build!

It’s step 2 in this process that causes trouble. NuGet does offer a command to install packages for a single project, but the developer has to run this command for each project that has package references. It would be nice if NuGet would install all packages for all projects using packages/repositories.config, but at the time of this writing it will not. However, it is fairly easy to add a PowerShell function to the NuGet Package Manager Console that will do this for you.

First, you should download the NuGet executable and add its directory to your PATH environment variable. I placed mine in C:\dev\utils\NuGet\<version>. You’ll need to be able to access the executable from the command line.

Second, you need to identify the expected location of the NuGet PowerShell profile. You can do this by launching Visual Studio, opening the Package Manager Console, typing $profile, and pressing Enter. The console will show you the expected profile path. In Windows 7 it will probably be: “C:\Users\<user>\Documents\WindowsPowerShell\NuGet_profile.ps1”

Next you need to create a file with that name in that directory.

Next, paste the following PowerShell code into the file:

    function Install-NuGetPackagesForSolution()
    {
        Write-Host "Installing packages for the following projects:"
        $projects = Get-Project -All
        $projects

        foreach ($project in $projects)
        {
            Install-NuGetPackagesForProject $project
        }
    }

    function Install-NuGetPackagesForProject($project)
    {
        if ($project -eq $null)
        {
            Write-Host "Project is required."
            return
        }

        $projectName = $project.ProjectName
        Write-Host "Checking packages for project $projectName"
        Write-Host ""

        $projectPath = $project.FullName
        $projectPath

        $dir = [System.IO.Path]::GetDirectoryName($projectPath)
        $packagesConfigFileName = [System.IO.Path]::Combine($dir, "packages.config")
        $hasPackagesConfig = [System.IO.File]::Exists($packagesConfigFileName)

        if ($hasPackagesConfig -eq $true)
        {
            Write-Host "Installing packages for $projectName using $packagesConfigFileName."
            nuget i $packagesConfigFileName -o ./packages
        }
    }

Restart Visual Studio 2010. After the package manager console loads, you should be able to run the Install-NuGetPackagesForSolution command. The command will iterate over each of your projects, check to see if the project contains a packages.config file, and if so run NuGet install against the packages.config.

You may also wish to do this from the PowerShell console outside of Visual Studio. If you are in the solution root directory, you can run the following two commands:

    $files = gci -Filter packages.config -Recurse
    $files | ForEach-Object { nuget i $_.FullName -o .\packages }

System.Enum is a powerful type to use in the .NET Framework. It’s best used when the list of possible states is known and defined by the system that uses them. Enumerations are not good in any situation that requires third-party extensibility. While I love enumerations, they do present some problems.

First, the code that uses them often exists in the form of switch statements that get repeated throughout the code base. This violation of DRY is not good.

Second, if a new value is added to the enumeration, any code that relies on the enumeration must take the new value into account. While not technically a violation of the LSP, it’s close.

Third, enums are limited in what they can express. They are basically a set of named integer constants treated as a separate type. Any other data or functionality you might wish them to have has to be added on through other objects. A common requirement is to present a human-readable version of the enum field to the user, which is most often accomplished through the use of the Description attribute. A further problem is that they are often serialized as integers, which means that the textual representation has to be reproduced in whatever database, reporting tool, or other system consumes the serialized value.
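To make the third point concrete, here is a minimal sketch of the Description attribute approach. The `OrderStatus` enum and the `EnumText.Describe` helper are names I made up for illustration; only `DescriptionAttribute` and the reflection calls come from the .NET Framework.

```csharp
using System;
using System.ComponentModel;
using System.Reflection;

// Hypothetical enum, used only for this illustration.
public enum OrderStatus
{
    [Description("Awaiting Payment")]
    AwaitingPayment,

    [Description("Shipped")]
    Shipped,

    // No attribute here, so the helper falls back to the member name.
    Canceled
}

// Hypothetical helper; the name is an assumption, not a framework type.
public static class EnumText
{
    public static string Describe(Enum value)
    {
        // Each enum member is a public static field named after the member.
        FieldInfo field = value.GetType().GetField(value.ToString());
        if (field == null)
        {
            // Undefined numeric values such as (OrderStatus)42 have no field.
            return value.ToString();
        }

        var attribute = (DescriptionAttribute)Attribute.GetCustomAttribute(
            field, typeof(DescriptionAttribute));

        return attribute != null ? attribute.Description : value.ToString();
    }
}
```

The friendly text lives only in this assembly. Anything that reads the stored integer value has to reproduce the mapping itself, which is the duplication described above.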

Polymorphism is always an alternative to enumerations. Polymorphism brings a problem of its own—lack of discoverability. It would be nice if we could have the power of a polymorphic type coupled with the discoverability of an enumeration.

Fortunately, we can. It’s a variation on the Flyweight pattern. I don’t think it fits the technical definition of the Flyweight pattern because it has a different purpose: Flyweight is generally employed to minimize memory usage, whereas we’re going to borrow some of the pattern’s mechanics for a different end.

Consider a simple domain model with two objects: Task and TaskState. A Task has a Name, Description, and TaskState. A TaskState has a Name, a Value, and a boolean method that indicates whether a task can move from one state to another. The available states are Pending, InProgress, Completed, Deferred, and Canceled.

If we implemented this polymorphically, we’d create an abstract class or interface to represent the TaskState. Then we’d provide the various property and method implementations for each subclass. We would have to search the type system to discover which subclasses are available to represent TaskStates.
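For comparison, a purely polymorphic version might look roughly like the sketch below. The subclass names and the way each one checks transitions are my own assumptions for illustration, and only three of the five states are shown.

```csharp
using System;

// Sketch of the polymorphic alternative: one subclass per state.
public abstract class TaskState
{
    public abstract string Name { get; }
    public abstract string Value { get; }

    // Each subclass decides which states it may move to.
    public abstract bool CanTransitionTo(TaskState next);
}

public class PendingState : TaskState
{
    public override string Name { get { return "Pending"; } }
    public override string Value { get { return "Pending"; } }

    public override bool CanTransitionTo(TaskState next)
    {
        return next is InProgressState;
    }
}

public class InProgressState : TaskState
{
    public override string Name { get { return "In Progress"; } }
    public override string Value { get { return "InProgress"; } }

    public override bool CanTransitionTo(TaskState next)
    {
        return next is CompletedState;
    }
}

public class CompletedState : TaskState
{
    public override string Name { get { return "Completed"; } }
    public override string Value { get { return "Completed"; } }

    public override bool CanTransitionTo(TaskState next)
    {
        // A completed task is treated as final in this sketch.
        return false;
    }
}
```

A caller has to know that PendingState exists and write `new PendingState()` to get one. Nothing on TaskState itself lists the available states, which is the discoverability problem.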

Instead, let’s create a sealed TaskState class with a defined list of static TaskState instances and a private constructor. We seal the class because our system controls the available TaskStates. We privatize the constructor because we want clients of this class to be forced to use the pre-defined static instances. Finally, we initialize the static instances in a static constructor on the class. Here’s what the code looks like:

    using System.Collections.Generic;

    public sealed class TaskState
    {
        // The pre-defined instances that clients use like enum members.
        public static TaskState Pending { get; private set; }
        public static TaskState InProgress { get; private set; }
        public static TaskState Completed { get; private set; }
        public static TaskState Deferred { get; private set; }
        public static TaskState Canceled { get; private set; }

        public string Name { get; private set; }
        public string Value { get; private set; }

        private readonly List<TaskState> _transitions = new List<TaskState>();

        private TaskState AddTransition(TaskState value)
        {
            this._transitions.Add(value);
            return this;
        }

        public bool CanTransitionTo(TaskState value)
        {
            return this._transitions.Contains(value);
        }

        // Private so that clients must use the static instances.
        private TaskState() { }

        static TaskState()
        {
            BuildStates();
            ConfigureTransitions();
        }

        private static void ConfigureTransitions()
        {
            Pending.AddTransition(InProgress).AddTransition(Canceled);
            InProgress.AddTransition(Completed).AddTransition(Deferred).AddTransition(Canceled);
            Deferred.AddTransition(InProgress).AddTransition(Canceled);
        }

        private static void BuildStates()
        {
            Pending = new TaskState() { Name = "Pending", Value = "Pending" };
            InProgress = new TaskState() { Name = "In Progress", Value = "InProgress" };
            Completed = new TaskState() { Name = "Completed", Value = "Completed" };
            Deferred = new TaskState() { Name = "Deferred", Value = "Deferred" };
            Canceled = new TaskState() { Name = "Canceled", Value = "Canceled" };
        }
    }

This pattern allows us to consume the class as if it were an enumeration:

    var task = new Task() { State = TaskState.Pending };

    if (task.State.CanTransitionTo(TaskState.Completed))
    {
        // do something
    }

We still have the flexibility of polymorphic instances. I’ve used this pattern several times with great effect in my software. I hope it benefits you as much as it has me.
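The Task class itself is not shown above, so here is one way it might enforce the transitions. This is a sketch under my own assumptions: the `MoveTo` method is hypothetical, State gets a private setter (unlike the object-initializer usage above), and the TaskState below is a trimmed-down two-state stand-in, repeated only so the snippet compiles by itself.

```csharp
using System;
using System.Collections.Generic;

// Trimmed-down stand-in for the TaskState class above (two states only).
public sealed class TaskState
{
    public static readonly TaskState Pending = new TaskState("Pending");
    public static readonly TaskState InProgress = new TaskState("In Progress");

    private readonly List<TaskState> _transitions = new List<TaskState>();

    public string Name { get; private set; }

    private TaskState(string name) { Name = name; }

    static TaskState()
    {
        // Static field initializers have already run at this point.
        Pending._transitions.Add(InProgress);
    }

    public bool CanTransitionTo(TaskState value)
    {
        return _transitions.Contains(value);
    }
}

public class Task
{
    public Task()
    {
        State = TaskState.Pending;
    }

    public string Name { get; set; }
    public string Description { get; set; }
    public TaskState State { get; private set; }

    // Hypothetical method: moves the task only along a configured transition.
    public void MoveTo(TaskState next)
    {
        if (!State.CanTransitionTo(next))
        {
            throw new InvalidOperationException(
                "Cannot move from " + State.Name + " to " + next.Name + ".");
        }

        State = next;
    }
}
```

With this shape, the rule about which moves are legal lives in TaskState, and Task simply asks it. No switch statement over states is needed in the calling code.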

Answer: When you have to spend too long figuring out what the code does.

Consider the following partial implementation of a ShoppingCart class.

    public class ShoppingCart
    {
        public ShoppingCart()
        {
            this._readOnlyDetails = new ReadOnlyCollection<OrderDetail>(_details);
        }

        private readonly List<OrderDetail> _details = new List<OrderDetail>();
        private readonly ReadOnlyCollection<OrderDetail> _readOnlyDetails;

        public ReadOnlyCollection<OrderDetail> Details
        {
            get { return _readOnlyDetails; }
        }

        // ... remaining members omitted
    }

Our ShoppingCart class is simply hiding the internal storage mechanism of Details so that clients of ShoppingCart cannot add or remove products directly. For this reason, ShoppingCart must provide its own methods for modifying the OrderDetails collection. In this post I implemented this method and applied some heavy refactoring. Don’t read the implementation just yet.

Consider the requirements: When adding a product to the shopping cart, I must provide an OrderDetail that indicates the quantity of that product that has been ordered. Subsequent additions of the same product to the shopping cart should not result in additional order details. Rather, the existing OrderDetail should reflect the sum of all quantities of the product being ordered.

Imagine that you open the source file to see how this is done, but you have all the outlining collapsed so that all you see is method signatures. Imagine that these are the method signatures you see:

    public void AddProduct(Product product, int quantity)
    private OrderDetail FindOrCreateDetailForProduct(Product product)
    private OrderDetail CreateDetailForProduct(Product product)
    private OrderDetail FindDetailByProductSku(string productSku)
    private bool HasDetailForProductSku(string productSku)

Just read each one of these method signatures. Each method states clearly exactly what it is going to do. Now for each of the method signatures, imagine that you were going to expand the outlining so you can see the method body. What code would you expect to find in each one? Seriously, pause for a moment and think about what you would expect to find in each method body. Got it? Okay, now read the actual implementations of these methods.

    public void AddProduct(Product product, int quantity)
    {
        var detail = FindOrCreateDetailForProduct(product);
        detail.Quantity += quantity;
    }

    private OrderDetail FindOrCreateDetailForProduct(Product product)
    {
        var detail = HasDetailForProductSku(product.Sku)
            ? FindDetailByProductSku(product.Sku)
            : CreateDetailForProduct(product);
        return detail;
    }

    private OrderDetail CreateDetailForProduct(Product product)
    {
        var detail = new OrderDetail() { Product = product };
        this._details.Add(detail);
        return detail;
    }

    private OrderDetail FindDetailByProductSku(string productSku)
    {
        var detail = this.Details.Single(d => d.Product.Sku == productSku);
        return detail;
    }

    private bool HasDetailForProductSku(string productSku)
    {
        return this.Details.Any(d => d.Product.Sku == productSku);
    }

I’m sure that some of the implementations are different than you imagined, but are they different in any substantial way? Probably not. A method called HasDetailForProductSku can have only so many syntactic implementations, all of which are semantically identical. The method names and signatures state what our code is doing in English-language terms that are meaningful to a person. The bodies of these methods provide the syntax that is meaningful to the compiler. Class, method, parameter, and variable names are targeted at people. Their purpose is to express the intent of the code to people. Conditionals, loops, and other statements are the basic forms in which we code, but their target audience is the compiler. To put this in the form of a general principle: “Refactor when the semantic meaning of the code is hidden or obscured by the syntax required by the compiler,” or “Refactor when you have to spend too long figuring out what the code does.”

One of the points I tried to make in my talk about TDD yesterday is that TDD is more focused on the clarity and expressiveness of your code than on its actual implementation. I wanted to take a little time and expand on what I meant.

I used a Shopping Cart as a TDD sample. In the sample, the requirement is that as products are added to the shopping cart, the cart should contain a list of OrderDetails that are distinct by product sku. Here is the test I wrote for this case (this is commit #8 if you want to follow along):

    [Test]
    public void Details_AfterAddingSameProductTwice_ShouldDefragDetails()
    {
        // Arrange: Declare any variables or set up any conditions
        // required by your test.
        var cart = new Lib.ShoppingCart();
        var product = new Product() { Sku = "ABC", Description = "Test", Price = 1.99 };
        const int firstQuantity = 5;
        const int secondQuantity = 3;

        // Act: Perform the activity under test.
        cart.AddToCart(product, firstQuantity);
        cart.AddToCart(product, secondQuantity);

        // Assert: Verify that the activity under test had the
        // expected results
        Assert.That(cart.Details.Count, Is.EqualTo(1));
        var detail = cart.Details.Single();
        var expectedQuantity = firstQuantity + secondQuantity;
        Assert.That(detail.Quantity, Is.EqualTo(expectedQuantity));
        Assert.That(detail.Product, Is.SameAs(product));
    }

The naive implementation of AddToCart is currently as follows:

    public void AddToCart(Product product, int quantity)
    {
        this._details.Add(new OrderDetail() { Product = product, Quantity = quantity });
    }

This implementation of AddToCart fails the test case since it does not account for adding the same product sku twice. In order to get to the “Green” step, I made these changes:

    public void AddToCart(Product product, int quantity)
    {
        if (this.Details.Any(detail => detail.Product.Sku == product.Sku))
        {
            this.Details.First(detail => detail.Product.Sku == product.Sku).Quantity += quantity;
        }
        else
        {
            this._details.Add(new OrderDetail() { Product = product, Quantity = quantity });
        }
    }

At this point, the test passes, but I think the above implementation is kind of ugly. Having the code in this kind of ugly state still has value, though, because now I know I have solved the problem correctly. Let’s start by using Extract Condition on the conditional expression.

    public void AddToCart(Product product, int quantity)
    {
        var detail = this.Details.SingleOrDefault(d => d.Product.Sku == product.Sku);
        if (detail != null)
        {
            detail.Quantity += quantity;
        }
        else
        {
            this._details.Add(new OrderDetail() { Product = product, Quantity = quantity });
        }
    }

The algorithm being used is becoming clearer.

- Determine if I have an OrderDetail matching the Product Sku.

- If I do, increment the quantity.

- If I do not, create a new OrderDetail matching the product sku and set its quantity.

It’s a pretty simple algorithm. Let’s do a little more refactoring. Let’s apply Extract Method to the lambda expression.

    public void AddToCart(Product product, int quantity)
    {
        var detail = GetProductDetail(product);
        if (detail != null)
        {
            detail.Quantity += quantity;
        }
        else
        {
            this._details.Add(new OrderDetail() { Product = product, Quantity = quantity });
        }
    }

    private OrderDetail GetProductDetail(Product product)
    {
        return this.Details.SingleOrDefault(d => d.Product.Sku == product.Sku);
    }

This reads still more clearly. This is also where I stopped in my talk. Note that it has not been necessary to make changes to my test case because the changes I have made go to the private implementation of the class. I’d like to go a little further now and say that if I change the algorithm I can actually make this code even clearer. What if the algorithm was changed to:

- Find or Create an OrderDetail matching the product sku.

- Update the quantity.

In the first algorithm, I am taking different action with the quantity depending on whether or not the detail exists. In the new algorithm, I’m demoting the importance of whether the order detail already exists so that I can always take the same action with respect to the quantity. Here’s the naive implementation:

    public void AddToCart(Product product, int quantity)
    {
        OrderDetail detail;
        if (this.Details.Any(d => d.Product.Sku == product.Sku))
        {
            detail = this.Details.Single(d => d.Product.Sku == product.Sku);
        }
        else
        {
            detail = new OrderDetail() { Product = product };
            this._details.Add(detail);
        }
        detail.Quantity += quantity;
    }

The naive implementation is a little clearer. Let’s apply some refactoring effort and see what happens. Let’s apply Extract Method to the entire process of getting the order detail.

    public void AddToCart(Product product, int quantity)
    {
        var detail = GetDetail(product);
        detail.Quantity += quantity;
    }

    private OrderDetail GetDetail(Product product)
    {
        OrderDetail detail;
        if (this.Details.Any(d => d.Product.Sku == product.Sku))
        {
            detail = this.Details.Single(d => d.Product.Sku == product.Sku);
        }
        else
        {
            detail = new OrderDetail() { Product = product };
            this._details.Add(detail);
        }
        return detail;
    }

This is starting to take shape. However, “GetDetail” does not really communicate that we may be creating a new detail instead of just returning an existing one. If we rename it to FindOrCreateDetailForProduct, we may get that clarity.

    public void AddToCart(Product product, int quantity)
    {
        var detail = FindOrCreateDetailForProduct(product);
        detail.Quantity += quantity;
    }

    private OrderDetail FindOrCreateDetailForProduct(Product product)
    {
        OrderDetail detail;
        if (this.Details.Any(d => d.Product.Sku == product.Sku))
        {
            detail = this.Details.Single(d => d.Product.Sku == product.Sku);
        }
        else
        {
            detail = new OrderDetail() { Product = product };
            this._details.Add(detail);
        }
        return detail;
    }

AddToCart() looks pretty good now. It’s easy to read, and each line communicates the intent of our code clearly. FindOrCreateDetailForProduct() on the other hand is less easy to read. I’m going to apply Extract Conditional to the if statement, and Extract Method to each side of the expression. Here is the result:

    private OrderDetail FindOrCreateDetailForProduct(Product product)
    {
        var detail = HasProductDetail(product)
            ? FindDetailForProduct(product)
            : CreateDetailForProduct(product);
        return detail;
    }

    private OrderDetail CreateDetailForProduct(Product product)
    {
        var detail = new OrderDetail() { Product = product };
        this._details.Add(detail);
        return detail;
    }

    private OrderDetail FindDetailForProduct(Product product)
    {
        var detail = this.Details.Single(d => d.Product.Sku == product.Sku);
        return detail;
    }

    private bool HasProductDetail(Product product)
    {
        return this.Details.Any(d => d.Product.Sku == product.Sku);
    }

Now I’ve noticed that HasProductDetail and FindDetailForProduct are only using the product sku. I’m going to change the signature of these methods to accept only the sku, and I’ll change the method names accordingly.

    public void AddToCart(Product product, int quantity)
    {
        var detail = FindOrCreateDetailForProduct(product);
        detail.Quantity += quantity;
    }

    private OrderDetail FindOrCreateDetailForProduct(Product product)
    {
        var detail = HasDetailForProductSku(product.Sku)
            ? FindDetailByProductSku(product.Sku)
            : CreateDetailForProduct(product);
        return detail;
    }

    private OrderDetail CreateDetailForProduct(Product product)
    {
        var detail = new OrderDetail() { Product = product };
        this._details.Add(detail);
        return detail;
    }

    private OrderDetail FindDetailByProductSku(string productSku)
    {
        var detail = this.Details.Single(d => d.Product.Sku == productSku);
        return detail;
    }

    private bool HasDetailForProductSku(string productSku)
    {
        return this.Details.Any(d => d.Product.Sku == productSku);
    }

At this point, the AddToCart() method has gone through some pretty extensive refactoring. The basic algorithm has been changed, and the implementation of the new algorithm has been changed a lot. Now let me point something out: At no time during any of these changes did our test fail, and at no time during these changes did our test fail to express the intended behavior of the class. We made changes to every aspect of the implementation: We changed the order of the steps in the algorithm. We constantly added and renamed methods until we had very discrete well-named functions that stated explicitly what the code is doing. The unit test remained a valid expression of intended behavior despite all of these changes. This is what it means to say that a test is more about API than implementation. The unit-test should not depend on the implementation, nor does it necessarily imply a particular implementation.

Happy Coding!

Tomorrow I will be giving a talk on TDD at CMAP. The demo code and outline I will be using can be found on bitbucket here.

Here is the outline for the talk:

- I. Tools
    - A. Framework
    - B. Test Runner
    - C. Brains
- II. Test Architecture
    - A. Test Fixture
    - B. Setup
    - C. Test Method
    - D. TearDown
- III. Process
    - A. Red
    - B. Green
    - C. Refactor
    - D. Rinse and Repeat
- IV. Conventions
    - A. At least one test fixture per class.
    - B. At least one test method per public method.
    - C. Test Method naming conventions
        - i. MethodUnderTest_ConditionUnderTest_ExpectedResult
    - D. Test Method section conventions
        - i. Arrange
        - ii. Act
        - iii. Assert
- V. Other Issues
    - A. Productivity Study
    - B. Testing the UI
        - i. Not technically possible without more tooling/infrastructure
        - ii. MVC patterns increase unit-test coverage.
        - iii. Legacy code.
            - a. Presents special problems.
            - b. Touching untested legacy code is dangerous.
            - c. Boy-Scout rule.
            - d. Use your own judgment
    - C. Pros and Cons
        - i. Pros
            - a. Quality.
            - b. Encourage a more loosely-coupled design.
            - c. Document the work that is done.
            - d. Regression testing.
            - e. Increased confidence in working code means changes are easier to make.
            - f. Encourages devs to think about code in terms of API instead of implementation.
                - 1. Makes code more readable.
                - 2. Readable code communicates intent more clearly.
                - 3. Readable code reduces the need for additional non-code documentation.
        - ii. Cons
            - a. Takes longer to develop.
            - b. Test code must be maintained as well.
            - c. Requires that devs adapt to new ways of thinking about code.
    - D. Notes
        - i. You’re already doing it.
        - ii. "The Art of Unit Testing" by Roy Osherove
        - iii. "Clean Code" by Robert C. Martin
        - iv. "Head First Design Patterns" by Elisabeth and Eric Freeman, Bert Bates, and Kathy Sierra

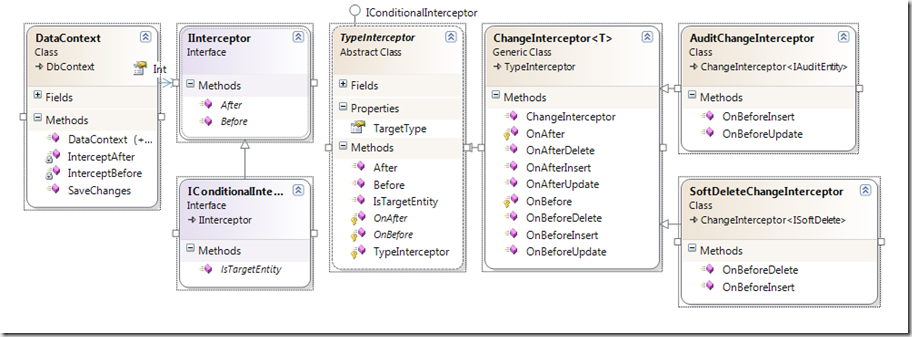

Interceptors are a great way to handle some repetitive and predictable data management tasks. NHibernate has good support for Interceptors both at the change and query levels. I wondered how hard it would be to write interceptors for the new EF4 CTP and was surprised at how easy it actually was… well, for the change interceptors anyway. It looks like query interceptors would require a complete reimplementation of the Linq provider—not something I feel like undertaking right now.

On to the Code!

This is the first interface we’ll use to create a class that can respond to changes in the EF4 data context.

    namespace Yodelay.Data.Entity
    {
        /// <summary>
        /// Interface to support taking some action in response
        /// to some activity taking place on an ObjectStateEntry item.
        /// </summary>
        public interface IInterceptor
        {
            void Before(ObjectStateEntry item);
            void After(ObjectStateEntry item);
        }
    }

We’ll also use this interface to add some conditional execution support.

    namespace Yodelay.Data.Entity
    {
        /// <summary>
        /// Adds conditional execution to an IInterceptor.
        /// </summary>
        public interface IConditionalInterceptor : IInterceptor
        {
            bool IsTargetEntity(ObjectStateEntry item);
        }
    }

The first interceptor I want to write is one that manages four audit columns automatically. First I need an interface that provides the audit columns:

    public interface IAuditEntity
    {
        DateTime InsertDateTime { get; set; }
        DateTime UpdateDateTime { get; set; }
        string InsertUser { get; set; }
        string UpdateUser { get; set; }
    }

The EF4 DbContext class provides an override for SaveChanges() that I can use to start handling the events. I decided to subclass DbContext and add the interception capability to the new class. I snipped the constructors for brevity, but all of the constructors from the base class are bubbled.

    public class DataContext : DbContext
    {
        // Constructors snipped for brevity.

        private readonly List<IInterceptor> _interceptors = new List<IInterceptor>();

        public List<IInterceptor> Interceptors
        {
            get { return this._interceptors; }
        }

        private void InterceptBefore(ObjectStateEntry item)
        {
            this.Interceptors.ForEach(intercept => intercept.Before(item));
        }

        private void InterceptAfter(ObjectStateEntry item)
        {
            this.Interceptors.ForEach(intercept => intercept.After(item));
        }

        public override int SaveChanges()
        {
            const EntityState entitiesToTrack = EntityState.Added |
                                                EntityState.Modified |
                                                EntityState.Deleted;

            // Snapshot the pending entries before saving.
            var elementsToSave =
                this.ObjectContext
                    .ObjectStateManager
                    .GetObjectStateEntries(entitiesToTrack)
                    .ToList();

            elementsToSave.ForEach(InterceptBefore);
            var result = base.SaveChanges();
            elementsToSave.ForEach(InterceptAfter);

            return result;
        }
    }

I only want the AuditChangeInterceptor to fire if the object implements the IAuditEntity interface. I could have directly implemented IConditionalInterceptor, but I decided to extract the object-type criteria into a super-class.

    public abstract class TypeInterceptor : IConditionalInterceptor
    {
        private readonly System.Type _targetType;

        public Type TargetType { get { return _targetType; } }

        protected TypeInterceptor(System.Type targetType)
        {
            this._targetType = targetType;
        }

        public virtual bool IsTargetEntity(ObjectStateEntry item)
        {
            return item.State != EntityState.Detached &&
                   this.TargetType.IsInstanceOfType(item.Entity);
        }

        public void Before(ObjectStateEntry item)
        {
            if (this.IsTargetEntity(item))
                this.OnBefore(item);
        }

        protected abstract void OnBefore(ObjectStateEntry item);

        public void After(ObjectStateEntry item)
        {
            if (this.IsTargetEntity(item))
                this.OnAfter(item);
        }

        protected abstract void OnAfter(ObjectStateEntry item);
    }

I also decided that the super-class should provide obvious method-overrides for BeforeInsert, AfterInsert, BeforeUpdate, etc. For that I created a generic class that sub-classes TypeInterceptor and provides friendlier methods to work with.

    public class ChangeInterceptor<T> : TypeInterceptor
    {
        public ChangeInterceptor() : base(typeof(T))
        {
        }

        #region Overrides of Interceptor

        protected override void OnBefore(ObjectStateEntry item)
        {
            T tItem = (T) item.Entity;
            switch (item.State)
            {
                case EntityState.Added:
                    this.OnBeforeInsert(item.ObjectStateManager, tItem);
                    break;
                case EntityState.Deleted:
                    this.OnBeforeDelete(item.ObjectStateManager, tItem);
                    break;
                case EntityState.Modified:
                    this.OnBeforeUpdate(item.ObjectStateManager, tItem);
                    break;
            }
        }

        // Caution: by the time After() runs, SaveChanges() has normally accepted
        // the changes, so item.State is usually no longer Added, Deleted, or
        // Modified. Capture the state before saving if these callbacks must fire.
        protected override void OnAfter(ObjectStateEntry item)
        {
            T tItem = (T) item.Entity;
            switch (item.State)
            {
                case EntityState.Added:
                    this.OnAfterInsert(item.ObjectStateManager, tItem);
                    break;
                case EntityState.Deleted:
                    this.OnAfterDelete(item.ObjectStateManager, tItem);
                    break;
                case EntityState.Modified:
                    this.OnAfterUpdate(item.ObjectStateManager, tItem);
                    break;
            }
        }

        #endregion

        // Default implementations do nothing; subclasses override what they need.
        public virtual void OnBeforeInsert(ObjectStateManager manager, T item) { }
        public virtual void OnAfterInsert(ObjectStateManager manager, T item) { }
        public virtual void OnBeforeUpdate(ObjectStateManager manager, T item) { }
        public virtual void OnAfterUpdate(ObjectStateManager manager, T item) { }
        public virtual void OnBeforeDelete(ObjectStateManager manager, T item) { }
        public virtual void OnAfterDelete(ObjectStateManager manager, T item) { }
    }

Finally, I subclassed ChangeInterceptor<IAuditEntity>.

    public class AuditChangeInterceptor : ChangeInterceptor<IAuditEntity>
    {
        public override void OnBeforeInsert(ObjectStateManager manager, IAuditEntity item)
        {
            base.OnBeforeInsert(manager, item);
            item.InsertDateTime = DateTime.Now;
            item.InsertUser = System.Threading.Thread.CurrentPrincipal.Identity.Name;
            item.UpdateDateTime = DateTime.Now;
            item.UpdateUser = System.Threading.Thread.CurrentPrincipal.Identity.Name;
        }

        public override void OnBeforeUpdate(ObjectStateManager manager, IAuditEntity item)
        {
            base.OnBeforeUpdate(manager, item);
            item.UpdateDateTime = DateTime.Now;
            item.UpdateUser = System.Threading.Thread.CurrentPrincipal.Identity.Name;
        }
    }

I plugged this into my app, and it worked on the first go.

Another common scenario I encounter is “soft-deletes.” A “soft-delete” is a virtual record deletion that does not actually remove the record from the database. Instead it sets an IsDeleted flag on the record, and the record is then excluded from other queries. The problem with soft-deletes is that developers and report writers always have to remember to add the “IsDeleted == false” criteria to every query in the system that touches the affected records. It would be great to replace the standard delete functionality with a soft-delete, and to modify the IQueryable to return only records for which “IsDeleted == false.” Unfortunately, I was unable to find a clean way to add query-interceptors to the data model to keep deleted records from being returned. However, I was able to get the basic soft-delete ChangeInterceptor to work. Here is that code.

    public interface ISoftDelete
    {
        bool IsDeleted { get; set; }
    }

    public class SoftDeleteChangeInterceptor : ChangeInterceptor<ISoftDelete>
    {
        public override void OnBeforeInsert(ObjectStateManager manager, ISoftDelete item)
        {
            base.OnBeforeInsert(manager, item);
            item.IsDeleted = false;
        }

        public override void OnBeforeDelete(ObjectStateManager manager, ISoftDelete item)
        {
            if (item.IsDeleted)
                throw new InvalidOperationException("Item is already deleted.");

            base.OnBeforeDelete(manager, item);
            item.IsDeleted = true;

            // Turn the pending delete into an update so the row is kept.
            manager.ChangeObjectState(item, EntityState.Modified);
        }
    }
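Until query interception is available, one workaround is to make the filter harder to forget rather than automatic. The sketch below is my own assumption, not part of EF: a hypothetical `WhereNotDeleted` extension method that works on any IQueryable of ISoftDelete entities. The interface is repeated and a throwaway `SampleRecord` class is included so the snippet compiles by itself. I have only reasoned about it against LINQ to Objects, so whether a given EF version translates the predicate is something you would need to verify.

```csharp
using System.Linq;

public interface ISoftDelete
{
    bool IsDeleted { get; set; }
}

// Hypothetical helper; the class and method names are illustrative only.
public static class SoftDeleteQueryExtensions
{
    // Appends the "IsDeleted == false" criteria to a query over
    // entities that implement ISoftDelete.
    // Assumption: the "class" constraint is there because, as far as I know,
    // it keeps a cast to ISoftDelete out of the expression tree, which some
    // LINQ providers cannot translate.
    public static IQueryable<T> WhereNotDeleted<T>(this IQueryable<T> source)
        where T : class, ISoftDelete
    {
        return source.Where(item => !item.IsDeleted);
    }
}

// Throwaway entity used only to demonstrate the helper.
public class SampleRecord : ISoftDelete
{
    public string Title { get; set; }
    public bool IsDeleted { get; set; }
}
```

A call site then reads `query.WhereNotDeleted()`. Developers still have to remember to call it, so this reduces the problem rather than removing it.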

Here’s the complete diagram of the code:

EF4 has come a long way with respect to supporting extensibility. It still needs query interceptors to reach feature parity with other ORM tools such as NHibernate, but I suspect that it is just a matter of time before the MS developers get around to adding that functionality. For now, you can use the interceptor model I’ve demoed here to add functionality to your data models. Perhaps you could use them to add logging, validation, or security checks to your models. What can you come up with?

My “Introduction to Test Driven Development” talk was accepted for the Fall 2010 CMAP! From the talk description:

This talk will demonstrate the basics of writing unit tests, as well as how Test Driven Development solves many common software development problems. We will cover some of the research that has been done on the effectiveness of TDD. We will also deal with some of the more common questions and concerns surrounding TDD, such as productivity, testing the UI, and testing legacy code.

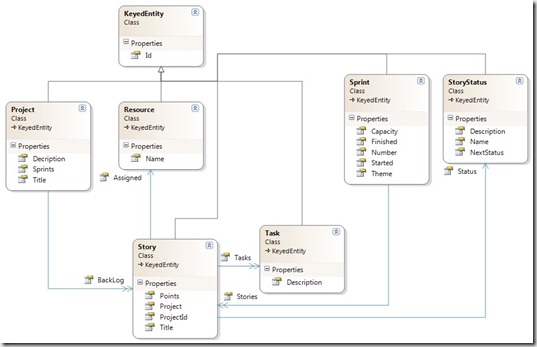

Configuring ORMs through fluent API calls is relatively new to me. For the last three years or so I’ve been using EF1 and Linq-To-Sql as my data modeling tools of choice. My first exposure to a code-first ORM tool came in June when I started working with Fluent NHibernate. As interesting as that has been, I hadn’t really been faced with the issue of proper configuration because I’ve had someone on our team who does it easily. This weekend I started working on a sample project using the EF4 CTP, and the biggest stumbling block has been modeling the relationships.

The context is a Scrum process management app I’m writing. Here’s the model:

The relationship I tried to model was the one between Project and Story via the Backlog property. Since “Project” is the first entity I needed to write, my natural inclination was to model the relationship to “Story” first.

- public class DataContext : DbContext

- {

- public DbSet<Project> Projects { get; set; }

- public DbSet<Resource> Resources { get; set; }

- public DbSet<Sprint> Sprints { get; set; }

- public DbSet<Story> Stories { get; set; }

- public DbSet<StoryStatus> StoryStatuses { get; set; }

- public DbSet<Task> Tasks { get; set; }

- protected override void OnModelCreating(System.Data.Entity.ModelConfiguration.ModelBuilder builder)

- {

- base.OnModelCreating(builder);

- builder

- .Entity<Project>()

- .HasMany(e => e.BackLog);

- }

- }

I knew that “Story” would be slightly more complex because it has two properties that map back to “Project.” These are the “Project” property and the “ProjectId” property. Some of the EF4 samples I found refer to a “Relationship” extension method that I was unable to find in the API, so I was fairly confused. I finally figured out what I needed to do by reading this post from Scott Hanselman, though he doesn’t specifically highlight the question I was trying to answer.

This is the mapping code I created for “Story”:

- builder.Entity<Story>()

- .HasRequired(s => s.Project)

- .HasConstraint((story, project) => story.ProjectId == project.Id);

and this is the code I’m using to create a new Story for a project:

- [HttpPost]

- public ActionResult Create(Story model)

- {

- using (var context = new DataContext())

- {

- var project = context.Projects.Single(p => p.Id == model.ProjectId);

- project.BackLog.Add(model);

- context.SaveChanges();

- return RedirectToAction("Backlog", "Story", new {id = project.Id});

- }

- }

When I tried to save the story, the data context threw various exceptions. I thought I could avoid the problem by rewriting the code so that I was just adding the story directly to the “Stories” table on the DataContext (which I think will ultimately be the right thing, as it saves an unnecessary database call), but that would have been hacking around the problem without really understanding what was wrong with what I was doing. It took some staring at Scott Hanselman’s code sample for a while to realize what was wrong with my approach. Before I explain it, let me show you the mapping code that works.

- protected override void OnModelCreating(System.Data.Entity.ModelConfiguration.ModelBuilder builder)

- {

- base.OnModelCreating(builder);

- builder

- .Entity<Project>()

- .Property(p => p.Title)

- .IsRequired();

- // api no longer has Relationship() extension method.

- builder.Entity<Story>()

- .HasRequired(s => s.Project)

- .WithMany(p => p.BackLog)

- .HasConstraint((story, project) => story.ProjectId == project.Id);

- }

Notice what’s missing? I completely yanked the mapping of “Project->Stories” from the “Project” model’s perspective. Instead, I map the one-to-many relationship from the child-entity’s perspective, i.e., “Story.” Here’s how to read the mapping.

- // api no longer has Relationship() extension method.

- builder.Entity<Story>() // The entity story

- .HasRequired(s => s.Project) // has a required property called "Project" of type "Project"

- .WithMany(p => p.BackLog) // and "Project" has a reference back to "Story" through its "BackLog" collection property

- .HasConstraint((story, project) => story.ProjectId == project.Id); // and Story.ProjectId is the same as Story.Project.Id

The key here is understanding that one-to-many relationships must be modeled from the perspective of the child-entity.
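
For reference, here is roughly what the two entity classes need to look like for that mapping to make sense. This is a sketch rather than the actual classes from the project: the `int` key types, the `Title` property on “Story,” and the `AddToBacklog` helper are assumptions made for illustration. Only `Project.Title`, `Project.BackLog`, `Story.Project`, and `Story.ProjectId` come from the mapping code above. The helper just wires up both ends of the relationship by hand on plain objects, with no EF involved.

```csharp
using System;
using System.Collections.Generic;

public class Project
{
    public Project()
    {
        // Start with an empty backlog so callers can add stories right away.
        BackLog = new List<Story>();
    }

    public int Id { get; set; }                              // assumed key type
    public string Title { get; set; }
    public virtual ICollection<Story> BackLog { get; set; }  // the "many" side
}

public class Story
{
    public int Id { get; set; }                   // assumed key type
    public string Title { get; set; }             // assumed property
    public int ProjectId { get; set; }            // foreign key named in HasConstraint
    public virtual Project Project { get; set; }  // the required parent reference
}

public static class RelationshipDemo
{
    // Hypothetical helper: creates a story and connects both ends of the relationship.
    public static Story AddToBacklog(Project project, string title)
    {
        var story = new Story
        {
            Title = title,
            ProjectId = project.Id,
            Project = project
        };
        project.BackLog.Add(story);
        return story;
    }
}
```

Seen this way, the child is the one that carries the foreign key and the reference to the parent, which is why the mapping reads most naturally starting from “Story.”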